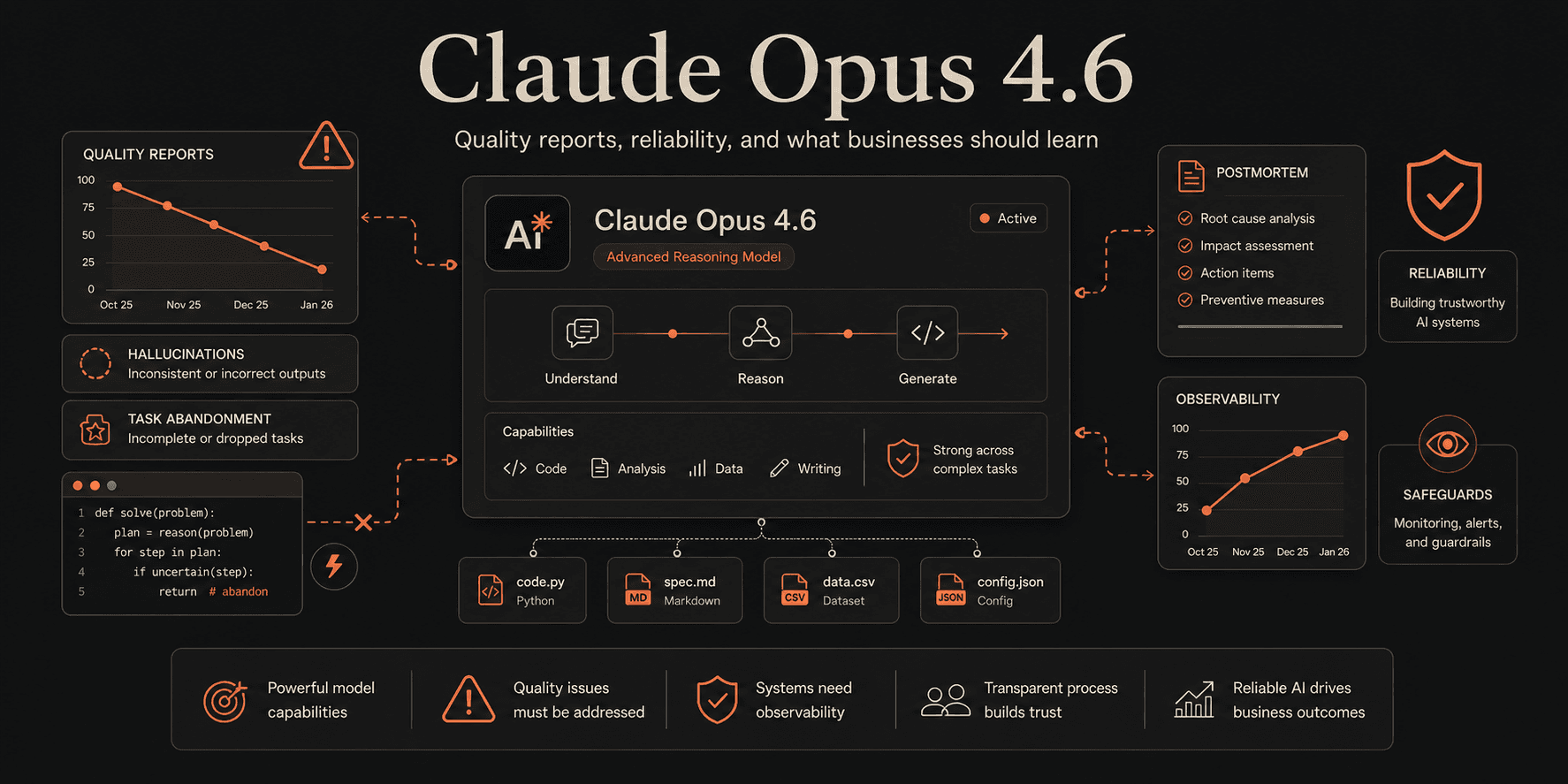

What the Claude Opus 4.6 Quality Controversy Actually Shows

A measured look at the Claude Opus 4.6 and Claude Code quality reports, what users claimed changed, what Anthropic acknowledged, and what businesses should learn from it.

Not every model controversy is a scandal

In early 2026, Claude Opus 4.6 became the center of a very specific kind of AI debate.

The issue was not that the model suddenly became useless. It was not that Anthropic publicly admitted to weakening the model. It was not even that every user experienced the same thing.

The controversy came from a growing number of developers and Claude Code users reporting that the product felt less reliable for complex coding workflows. Users described weaker reasoning, shorter follow-through, more hallucinated assumptions, less careful file inspection, and a tendency to stop before the task was actually complete.

That matters because Claude Opus 4.6 was launched as a high-end model for complex reasoning, long-context work, and agentic coding. When a model is positioned as strong at planning, tool use, and long-horizon software tasks, even a small perceived drop in consistency becomes highly visible to power users.

The better word for this moment is probably not “scandal.”

It was a quality controversy.

And for businesses building with AI, it is worth studying carefully.

What users said changed

The loudest reports came from developers using Claude Code for serious engineering workflows.

The complaints were not just “the model feels worse.” Many users described specific behavioral changes:

- editing files before reading enough surrounding context

- making confident assumptions instead of verifying them

- abandoning tasks early

- asking for permission instead of continuing work it had already started

- producing more shallow fixes

- missing project-specific conventions

- burning more tokens while delivering less useful output

- becoming less dependable during long coding sessions

For casual prompting, some of these differences might be hard to notice.

For software engineering, they are obvious.

A coding agent that reads fewer files before making edits can still produce code. The problem is that the code may fit the immediate instruction while missing the system around it. That is how small mistakes become expensive review cycles.

The “73% thinking depth” claim

One of the most widely discussed pieces of evidence came from a GitHub issue analyzing thousands of Claude Code session files.

The analysis claimed that estimated median thinking depth dropped from roughly 2,200 characters to around 600 characters, which the author described as a 73% decline. The same discussion claimed that the model appeared to read fewer files before editing, shifting from a more research-first behavior toward a more edit-first behavior.

That claim is important, but it needs to be handled carefully.

It was not Anthropic’s official conclusion. It was a user-submitted forensic analysis based on available session data, assumptions about visible thinking signals, and observed behavior across a specific engineering environment.

Still, the reason it resonated is obvious: it matched what many developers felt in practice.

The model did not just seem different. It seemed different in a way that could be explained by reduced deliberation.

Important distinction

The 73% figure should be treated as a reported analysis from user-submitted GitHub data, not as an official Anthropic metric. The useful takeaway is not the exact number. It is the pattern: users believed the model was taking more shortcuts during complex engineering work.

What Anthropic acknowledged

Anthropic responded with an official engineering postmortem on April 23, 2026.

The company said it took the quality reports seriously, did not intentionally degrade the models, and confirmed that the API and inference layer were unaffected. Anthropic also said it identified three different issues affecting Claude Code quality and that all three were resolved as of April 20 in version 2.1.116.

That response matters because it changes the framing.

This was not simply a case of users imagining a decline. Anthropic acknowledged there were quality issues worth investigating and fixing.

At the same time, Anthropic did not frame the situation as a deliberate nerfing of Claude Opus 4.6 itself. Their explanation focused on Claude Code quality reports, product-level issues, and fixes to the development environment around the model.

That distinction is important for anyone writing or thinking about this event.

The product experience can degrade even if the underlying model is not intentionally weakened.

Model quality and product quality are not the same thing

One of the biggest lessons from this controversy is that users do not experience a model in isolation.

They experience a product.

A developer using Claude Code is not only interacting with Claude Opus 4.6. They are interacting with:

- the model

- the editor or coding interface

- tool-calling behavior

- context management

- file-reading strategy

- hidden system prompts

- rate limits

- thinking or reasoning controls

- safety layers

- agentic execution rules

- UI changes

- infrastructure decisions

If any of those layers changes, the user may feel like the “model got worse.”

That does not mean the model weights changed. It means the system changed.

For businesses, this is a very practical point. If you build an AI workflow into your operations, the output quality depends on more than the model name in the dropdown.

A strong model inside a poorly controlled workflow can still fail.

Why developers noticed it so quickly

Developers are unusually sensitive to AI quality regression because coding tasks are full of hidden dependencies.

When an AI assistant writes marketing copy, a small drop in reasoning may produce something slightly more generic.

When an AI coding agent edits a production codebase, a small drop in reasoning can produce broken behavior, missed conventions, bad assumptions, or changes that look correct until they collide with the rest of the system.

Complex coding work requires the assistant to:

- inspect the right files

- understand naming patterns

- follow project conventions

- reason through dependencies

- avoid over-editing

- run or suggest the right checks

- know when not to change something

- continue until the task is actually complete

That is why “thinking depth” became such a central phrase in the debate.

For simple tasks, shallow reasoning may be fine.

For senior engineering workflows, shallow reasoning can become the difference between a helpful assistant and an expensive cleanup problem.

The issue was also about trust

The frustration around Claude Opus 4.6 was not only about performance.

It was about trust.

Users were paying for a premium AI product and building workflows around a certain level of capability. When the experience changed, many felt they had no clear visibility into what had changed, whether the issue was temporary, or how to adjust their usage.

That is a problem for every AI company, not just Anthropic.

As AI tools become part of real work, users need more than benchmark announcements. They need operational clarity.

They need to know when behavior changes, when product updates may affect workflows, and how to monitor whether the system is still performing at the level they expect.

AI products are becoming infrastructure.

Infrastructure needs transparency.

What this teaches businesses using AI agents

The Claude Opus 4.6 controversy is a useful warning for businesses adopting AI workflows.

The lesson is not “do not use Claude.”

The lesson is not “do not use AI agents.”

The lesson is that AI workflows need to be designed like systems, not treated like magic.

If an AI assistant is helping with important work, you need a way to detect when output quality changes.

That could mean:

- keeping human review on high-impact tasks

- logging AI decisions and outputs

- tracking failure patterns

- comparing output quality over time

- using approval steps before irreversible actions

- limiting agent permissions

- separating staging and production access

- testing model updates before rolling them into critical workflows

- designing fallbacks when a model becomes unreliable

The more important the workflow, the more structure it needs.

AI reliability is a systems problem

The model matters, but the surrounding workflow matters just as much. Permissions, logging, review steps, fallback paths, and evaluation loops are what turn AI from a helpful tool into a dependable business system.

Why “it passed the benchmark” is not enough

Claude Opus 4.6 launched with strong claims around reasoning, coding, long-context retrieval, agentic workflows, and complex task execution. Those benchmark results and partner quotes still matter.

But benchmark performance does not guarantee that every real-world workflow will feel stable forever.

A model can perform well on public evaluations and still frustrate users in long, messy, highly customized environments.

That gap is important.

Real work is not a benchmark. Real work has inconsistent files, legacy decisions, unclear instructions, partial context, human preferences, deadlines, and edge cases that never appear in a clean evaluation set.

This is why businesses should not choose AI tools based only on headline model rankings.

They should evaluate the model inside their actual workflow.

The right response is not panic

It would be easy to turn this event into a dramatic story about one AI company failing.

That would miss the point.

Anthropic remains one of the most important companies in frontier AI. Claude remains a serious product family with strong capabilities. Opus 4.6 was launched with ambitious claims because it is designed for ambitious work.

But ambitious AI products create ambitious expectations.

When developers use a model for complex software work, they notice subtle changes. When those changes affect productivity, they escalate quickly. When enough power users report the same pattern, the product team has to investigate.

That is what happened here.

The useful takeaway is not that Claude is bad.

The useful takeaway is that AI performance needs to be observable, testable, and governed when it becomes part of serious work.

What companies should do before relying on any AI model

The safest way to use AI in business is to assume that model behavior can change.

Not because companies are careless, but because these systems are constantly evolving.

Models get updated. Product layers change. Context handling changes. safety rules change. Tool behavior changes. Pricing and rate limits change. Infrastructure changes.

So before a business relies on any model for important workflows, it should answer a few questions.

What happens if the model gets worse for a week?

If the answer is “nothing major,” the workflow is low-risk.

If the answer is “we lose customers, ship bad code, send wrong information, or break operations,” the workflow needs safeguards.

Can we measure output quality?

A team should know whether the AI is helping or creating hidden cleanup work.

That might require simple internal checks like acceptance rates, edit distance, retry count, human corrections, escalation frequency, or time saved after review.

Can a human approve high-impact actions?

AI should not have unrestricted authority over sensitive business operations.

Deleting data, sending customer communications, changing production code, updating financial records, or making irreversible decisions should require explicit controls.

Can we switch models or workflows if needed?

Businesses should avoid designing themselves into a single fragile dependency.

The workflow should be structured enough that a model can be swapped, adjusted, or downgraded without breaking the entire process.

The real story

The Claude Opus 4.6 quality controversy is not just a story about one model release.

It is a story about the stage AI products have entered.

Users are no longer treating frontier models as toys. They are putting them inside real engineering workflows, real business processes, and real operational systems.

That raises the standard.

A model cannot just be impressive in a demo. It has to be consistent. It has to be observable. It has to fail safely. It has to give users enough control to trust it inside important work.

That is the real lesson.

The future of AI adoption will not be won only by the smartest model.

It will be won by the most reliable systems around the model.

Final thought

Claude Opus 4.6 did not need to become a culture-war-style “AI scandal” to be worth discussing.

The more useful framing is simpler.

A highly capable AI product appeared to deliver a degraded experience for a meaningful group of power users. Those users documented what they saw. Anthropic investigated, published a postmortem, and said the identified Claude Code issues were fixed.

That is a serious product moment.

For companies building AI workflows, it is also a reminder: do not build critical operations on vibes.

Build with measurement, controls, review loops, and fallback plans.

The model is only one part of the system.

Want help turning ideas like this into product, design, or growth systems?

Talk with Avalency about landing pages, product design, development, and AI workflows.